Faster documentation check.

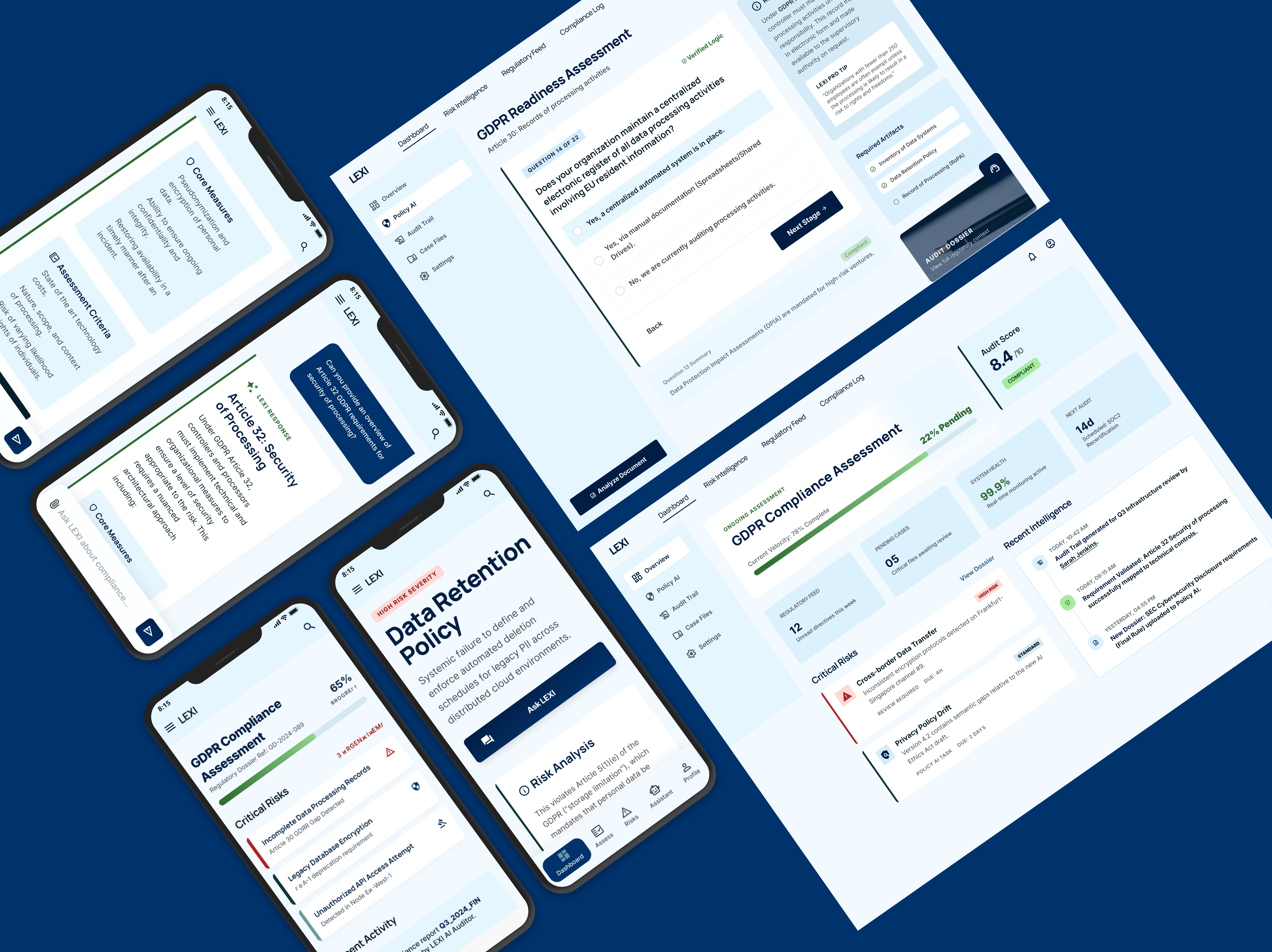

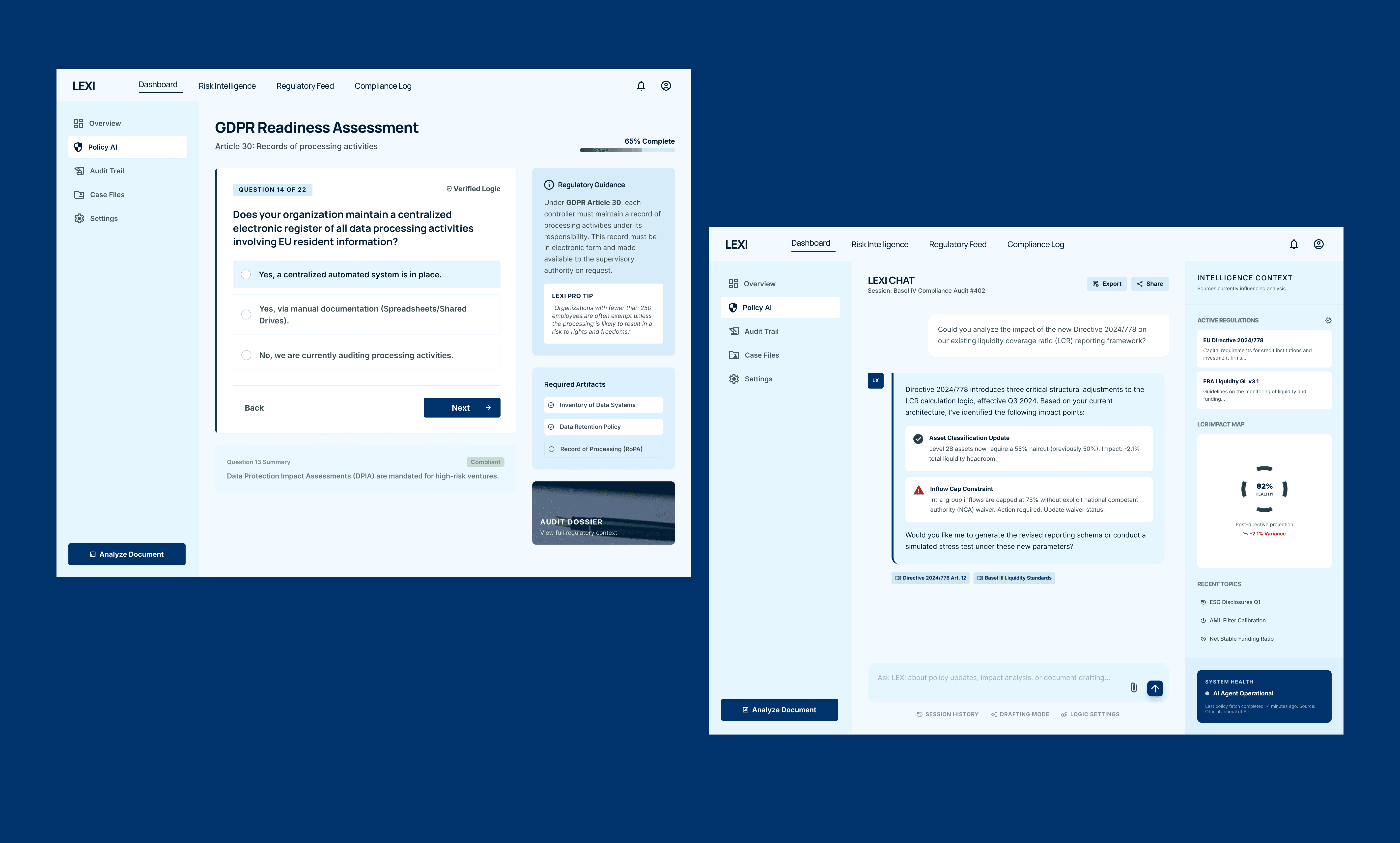

Lexi - the AI Compliance Assistant

Designing a decision-support system in the form of a chat-based AI application that helps non-legal teams navigate GDPR risk efficiently.

Role

Lead Product Designer

Industry

Conversational AI / B2B SaaS

Duration

3 months

The problem

Regulations like GDPR are complex, ambiguous, and constantly evolving. But the people making daily decisions that touch compliance (product managers, marketers, HR teams) are not lawyers. They ask questions like these every day:

"Can we email this list?"

"Can we track this behavior?"

"Can we store this data?"

Right now, the answer to those questions either goes through a legal team (slow, and a bottleneck) or gets guessed (fast, but risky). Existing tools like internal wikis, PDFs, or static documentation are not built for conversational, contextual decision-making.

The result: legal teams are overwhelmed. Non-legal teams are uncertain.

"The problem isn't a lack of information. It's a lack of the right information, at the right moment, in a form people can actually act on."

The solution

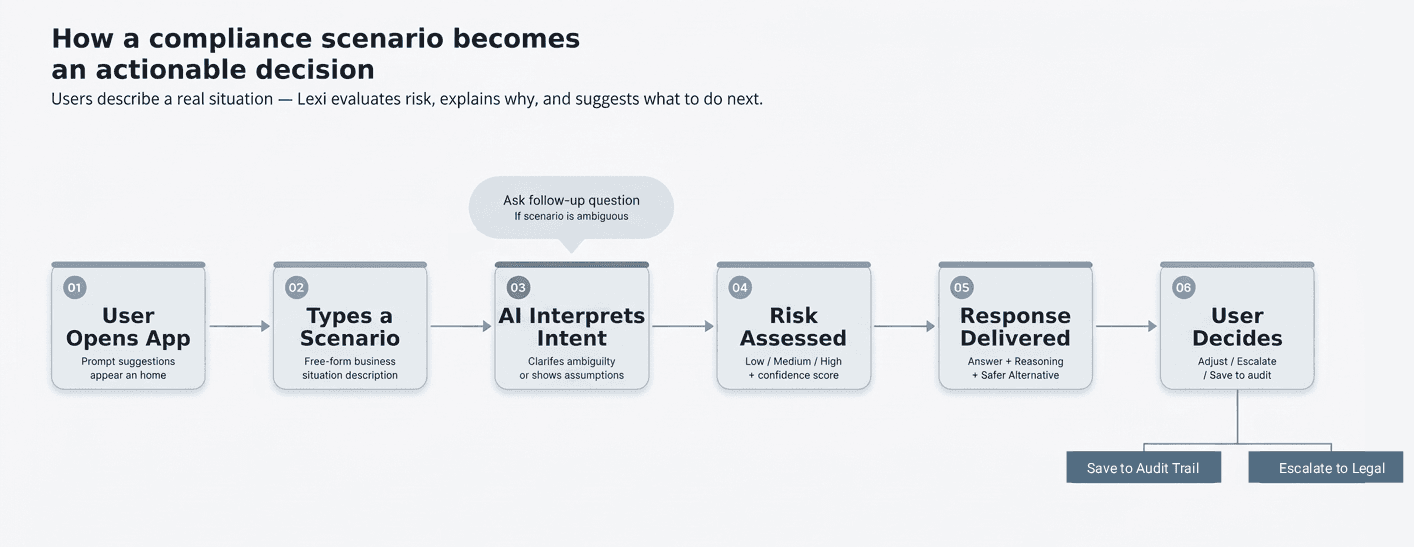

Lexi is a chat-based AI assistant built for one specific job: helping non-legal employees assess compliance risk before they act — not after.

Users describe a real business scenario in plain language. Lexi evaluates the risk, explains why, and — crucially — suggests a safer path forward. It doesn't just say no. It helps people move.

This distinction matters. Most compliance tools are gatekeepers. Lexi is a navigator.

Key UX decisions

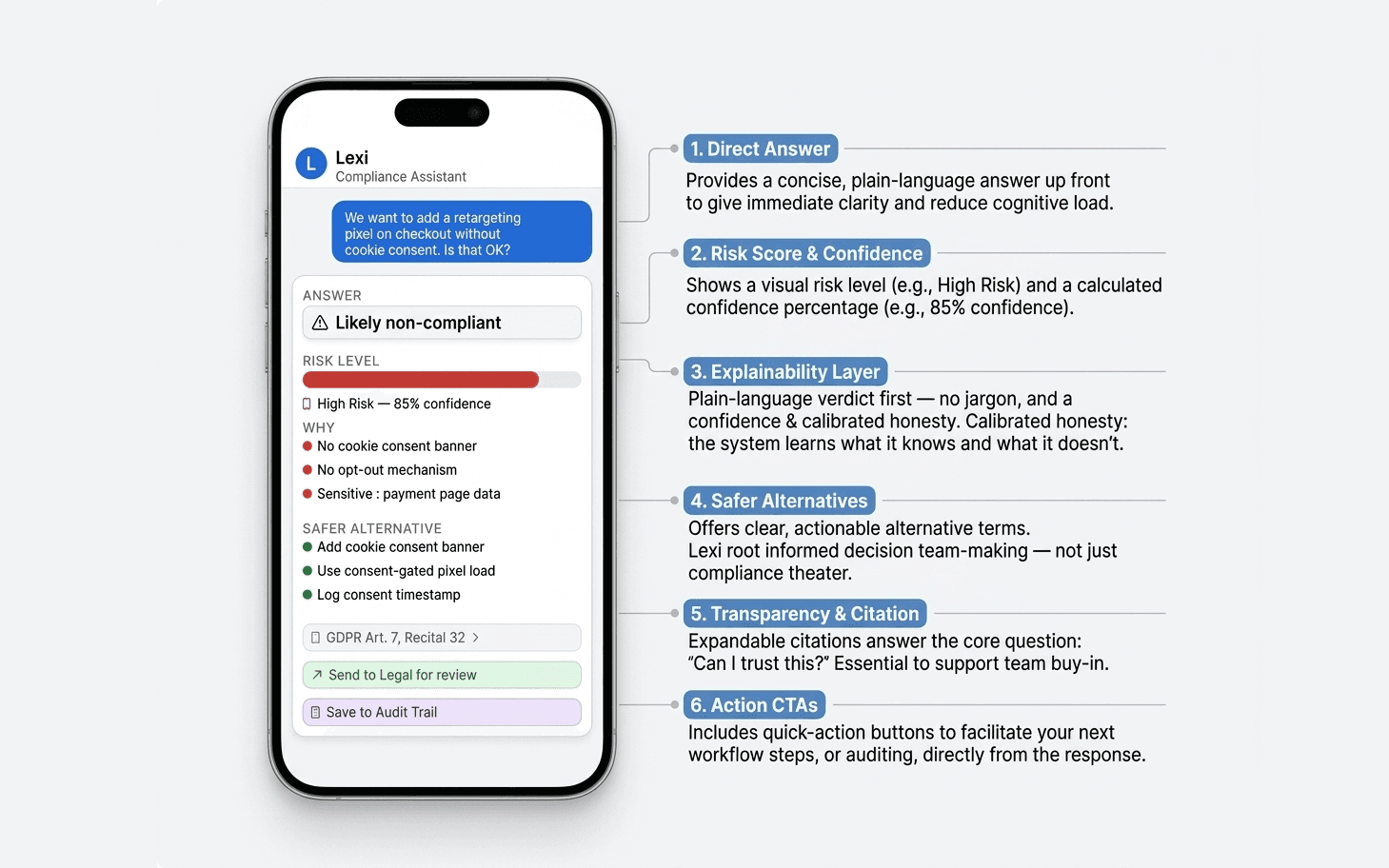

Decision 1: Layered information architecture

Legal accuracy and usability are often in direct tension. Too simple, and the tool is dangerous. Too detailed, and no one uses it.

My solution was to design a layered disclosure model — a clear verdict at the top, with reasoning and sources available on demand. The user gets what they need immediately, but can go deeper if they want accountability or want to share the rationale with their team.

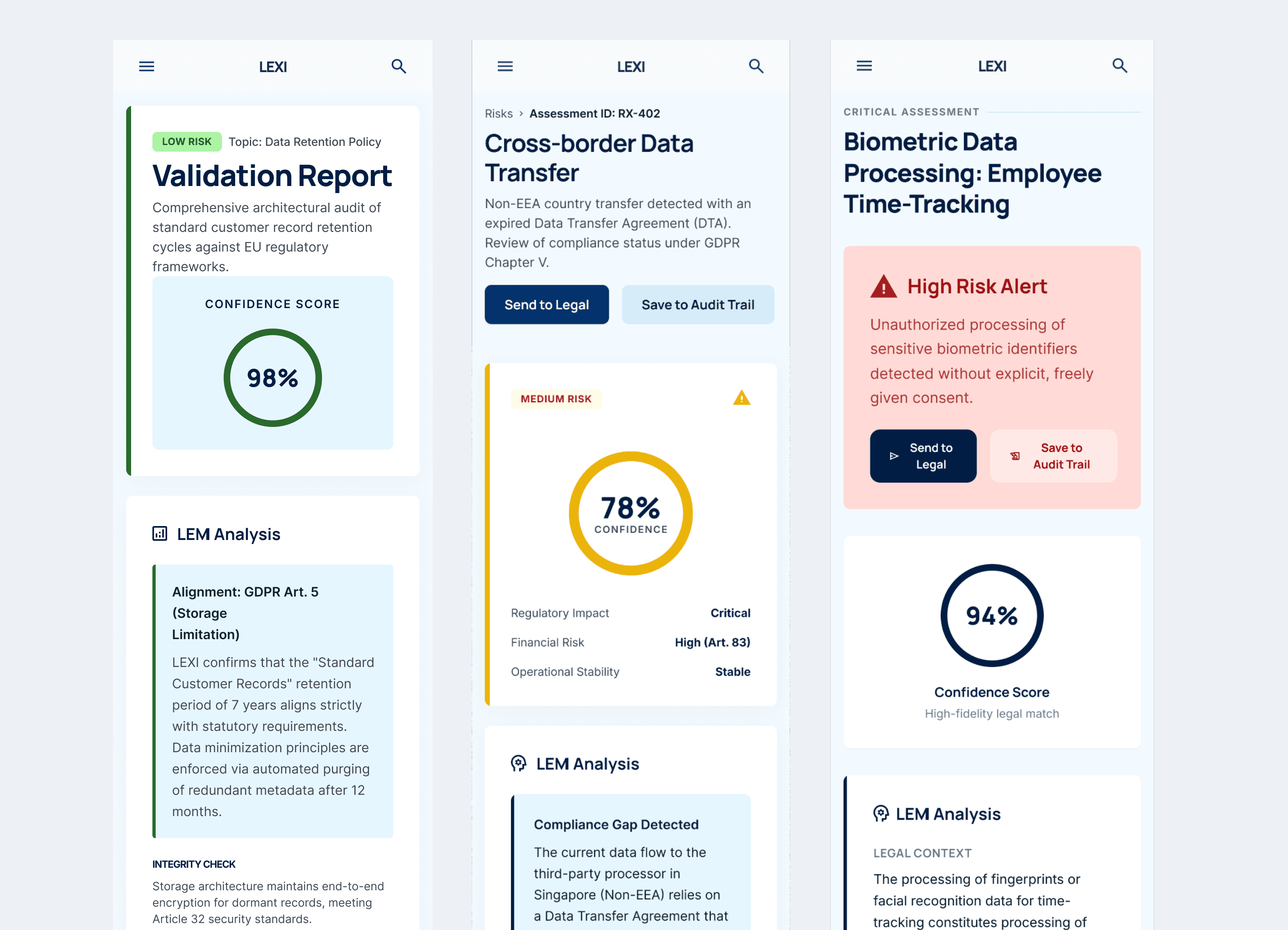

Decision 2: Risk score with confidence level

A risk score alone ("High Risk") creates false certainty. Legal interpretation is inherently probabilistic: an answer given with 55% confidence needs to look and feel different from one given with 85%.

By pairing the risk color (red / yellow / green) with a confidence percentage, Lexi communicates calibrated uncertainty. It's a small design detail, but it does significant trust-building work: it tells the user that the system knows what it doesn't know.
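As a rough illustration, the pairing of risk color and confidence could be modeled as a single value that is always rendered together. This is a minimal Python sketch, not Lexi's actual implementation; the names `RiskLevel`, `RiskAssessment`, and `badge` are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    """Hypothetical mapping of risk levels to the red / yellow / green colors."""
    LOW = "green"
    MEDIUM = "yellow"
    HIGH = "red"


@dataclass(frozen=True)
class RiskAssessment:
    level: RiskLevel
    confidence: float  # assumed to be a fraction between 0.0 and 1.0

    def badge(self) -> str:
        """Render the verdict and its confidence as one label, never one without the other."""
        return f"{self.level.name.title()} risk ({self.level.value}), {self.confidence:.0%} confidence"


print(RiskAssessment(RiskLevel.HIGH, 0.55).badge())
print(RiskAssessment(RiskLevel.HIGH, 0.85).badge())
```

Keeping the confidence inside the same object as the risk level makes it hard for any view to show a verdict without its uncertainty.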

Decision 3: "Safer Alternative" as the core differentiator

The "Safer Alternative" section is the most important part of the response — not the verdict, not the risk score. It's the part that makes the tool useful instead of just cautionary. It redirects the user toward a compliant path, which means the answer to "can we do this?" is often "not like that — but like this."

Decision 4: Escalation as a built-in behavior, not a failure state

When Lexi's confidence is low — typically because the scenario involves regional law variation or genuinely ambiguous facts — it doesn't just flag uncertainty. It offers a clear action: escalate to legal, with a pre-generated summary.

This does two things. First, it protects the user — they don't get a false answer in an edge case. Second, it protects the legal team — they receive structured, pre-analyzed context rather than a cold question.
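The escalation behavior can be sketched as a simple branch on confidence. This is an illustrative Python sketch under stated assumptions: the 0.6 cutoff, the `Answer` fields, and the `next_step` function are all hypothetical and not taken from the real product.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical cutoff; the real threshold is not stated in this case study.
ESCALATION_THRESHOLD = 0.6


@dataclass(frozen=True)
class Answer:
    scenario: str                     # the user's plain-language description
    verdict: str                      # e.g. "High risk"
    confidence: float                 # assumed fraction between 0.0 and 1.0
    assumptions: Tuple[str, ...] = ()  # assumptions the answer relies on


def next_step(answer: Answer) -> dict:
    """Show the answer when confidence is high enough, otherwise offer escalation."""
    if answer.confidence >= ESCALATION_THRESHOLD:
        return {"action": "show_answer", "verdict": answer.verdict}

    # Low confidence: prepare a structured summary for the legal team.
    lines = [
        f"Scenario: {answer.scenario}",
        f"Preliminary verdict: {answer.verdict} ({answer.confidence:.0%} confidence)",
    ]
    lines += [f"Assumption: {item}" for item in answer.assumptions]
    return {"action": "escalate_to_legal", "summary": "\n".join(lines)}


uncertain = Answer(
    scenario="Email a purchased contact list in Germany",
    verdict="High risk",
    confidence=0.45,
    assumptions=("Recipients are EU residents",),
)
print(next_step(uncertain)["summary"])
```

The point of the structure is that escalation is an ordinary return value, not an error path, so the interface can present it as a normal next action.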

Conclusions

Three challenges shaped most of the design decisions on this project:

Trust versus liability — Users need to trust the tool enough to act on it, but over-trust is dangerous. The design solution: confidence levels, explicit "AI is not legal advice" framing, and escalation paths that keep humans in the loop for edge cases.

Simplicity versus accuracy — Legal accuracy often requires nuance. The layered disclosure model (answer first, reasoning on demand) resolves this by serving both the user who needs a fast answer and the one who needs to document their reasoning.

Ambiguity handling — Users won't always ask precise questions. Lexi is designed to ask follow-up questions when a scenario is unclear, or to surface the assumptions it's making — so users know what the answer is actually based on.

Reflections

Designing AI interfaces for high-stakes professional contexts has made me think very differently about what "helpful" means. It isn't always a confident answer. Sometimes it's well-calibrated uncertainty, and sometimes it's a handoff to a human. The interface is simple, but it is not neutral. In a compliance tool, every design choice (the weight of a color, the phrasing of a label, the presence or absence of a disclaimer) has consequences.

Before this project, I worked on two AI-powered products in highly regulated environments (e.g. supporting NATO documentation workflows). Those projects shaped how I think about AI design in a very specific way: when the stakes are high, the interface is a layer of accountability.
