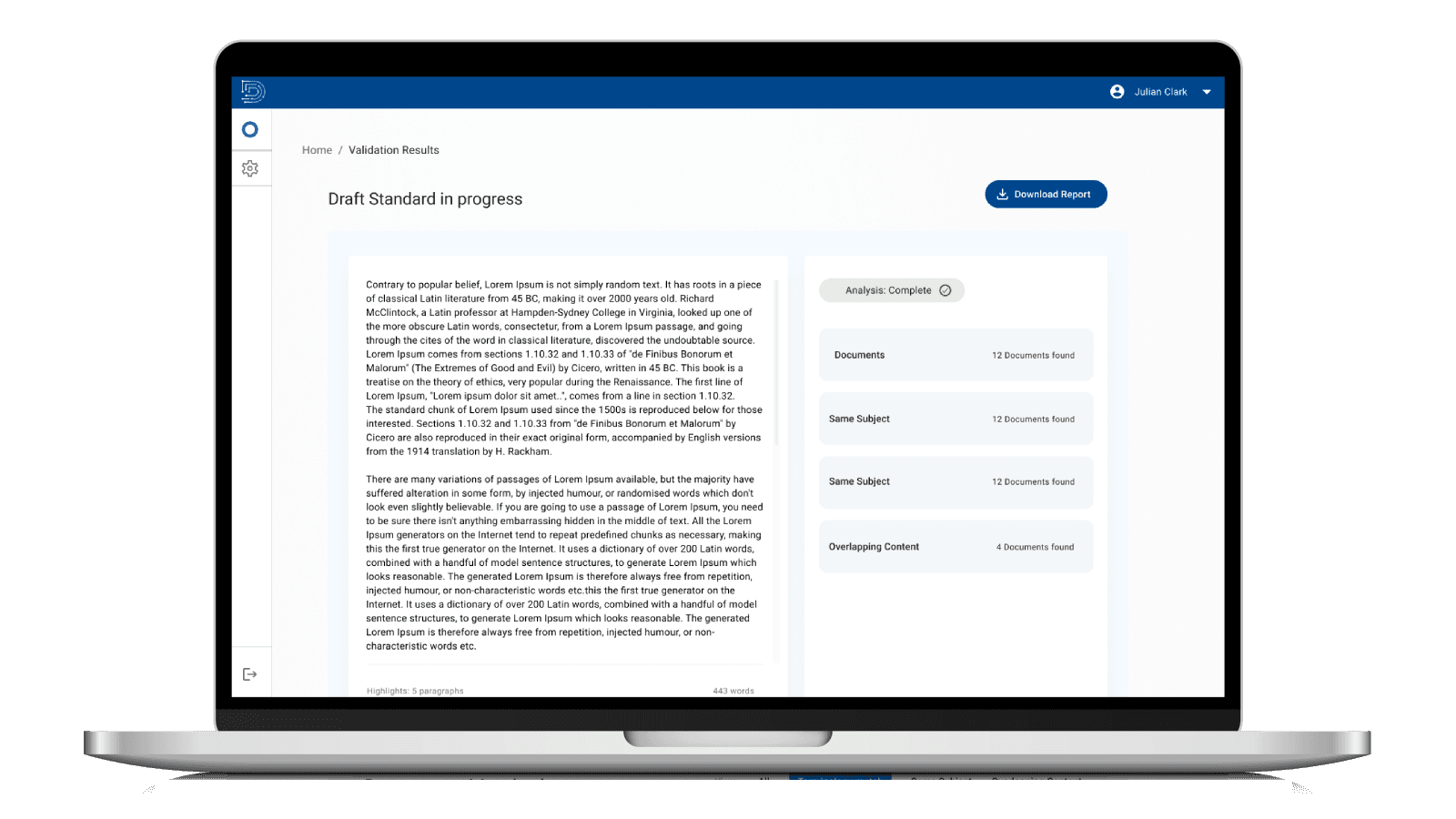

Faster documentation check.

AI-Powered Documentation Harmonization Tool for Standards Development

Designing an AI-powered auditing experience for high-stakes defense documentation.

Role

Lead Product Designer

Industry

AI / Artificial Intelligence in Governance / GovTech

Duration

6 months

Overview

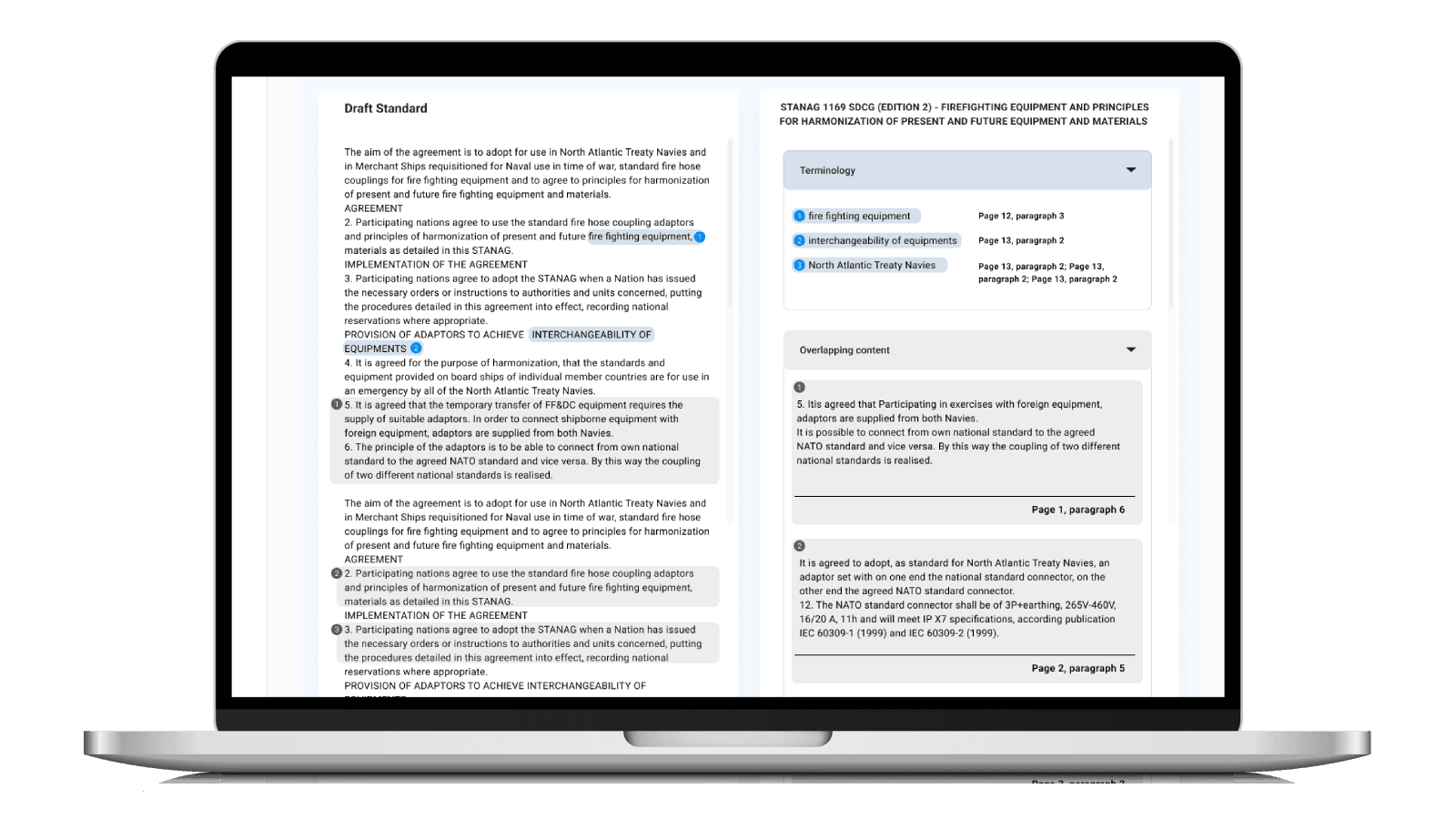

AI DEHZI (AI Document Examination & Harmonizer for Interoperability) is an AI-powered tool designed to support NATO standards custodians by automating the time-consuming process of finding overlapping standards, duplicated terminology, and conflicting content across official documentation.

The tool allows users to upload documents and instantly compare them against NATO’s NSDD database, surfacing relevant matches, semantic overlaps, contradictions, and terminology alignment — helping ensure harmonization during standards development.

This case study focuses on how I led end-to-end product design, from early user research and system framing to LLM training, feedback loops, and cross-functional collaboration with product and data science.

| Challenges | Design Opportunity |
|---|---|
| Developing and maintaining highly technical, interoperable documentation requires custodians to: manually search thousands of documents, which is time-consuming; identify overlapping content and duplicated terminology; detect contradictions between standards, which is cognitively demanding; and ensure semantic alignment across domains. | The opportunity wasn’t just to “add AI” but to design a trusted AI collaborator that could: surface relevant insights without overwhelming users; fit into existing workflows; provide explainable, verifiable results; rank results by relevance and impact on current work; and improve over time through real user feedback. |

2. Design Process & Strategy

I followed a continuous discovery and validation model, rather than a linear “research → design → build” flow, because both user needs and model performance evolved throughout the project.

Discovery & User Research

Stakeholder Interviews: Key Questions

How do you currently check for overlapping standards?

What’s the most frustrating part of your workflow?

What would make you trust an AI-generated result?

What would make you ignore it?

Key Insights

1. Search Fatigue

Users were spending hours manually searching for related documents — often unsure if they had “missed something important.”

2. Trust Over Speed

They didn’t want a fast answer — they wanted a verifiable one. Transparency mattered more than automation.

3. Cognitive Load

Raw search results weren’t helpful. Users needed structured, categorized and prioritized outputs.

Defining the Design Strategy

From research, I framed the product around three UX pillars:

1. Explainability

Every AI result must show why it was returned, not just what was found. Each result also carried a relevance rating.

2. Control

Users should guide the system, not feel replaced by it.

3. Workflow Integration

The tool should fit into how standards are already written, reviewed, and shared.

This directly shaped both interface design and model behavior requirements.

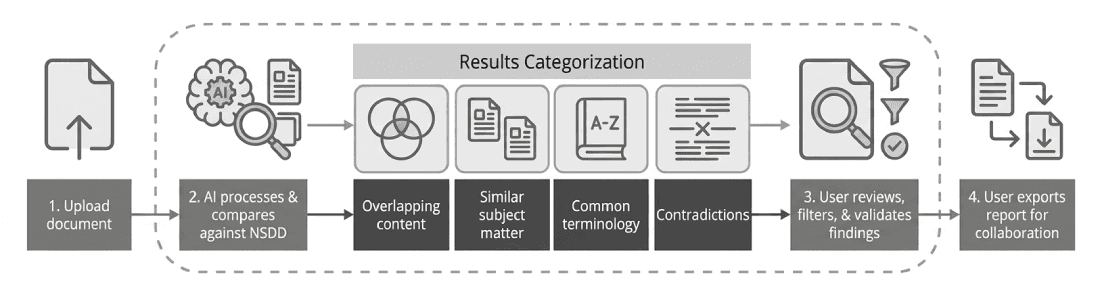

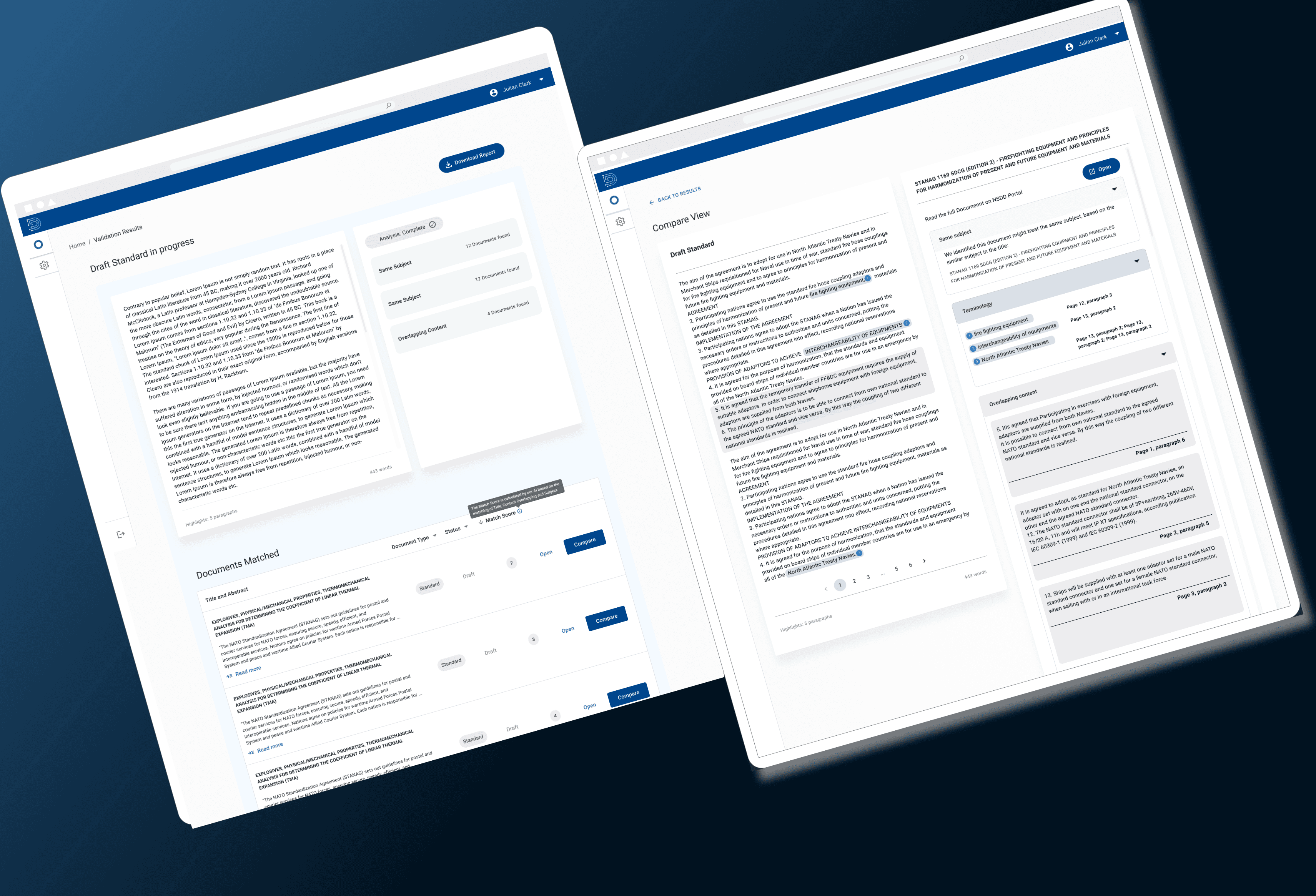

3. Mapping the AI System as a User Experience

I architected the core flow to prioritize human agency at every touchpoint—from ingestion to validation. By categorizing AI outputs into distinct cognitive layers (Overlaps, Contradictions, and Terminology), I transformed a complex data-science problem into a predictable, high-confidence workspace where the user remains the ultimate authority.
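As a rough illustration of the three output layers, the sketch below groups findings by category and orders each group by score. The `Category` enum, the tuple layout, and the sample titles are assumptions made for this example; they are not taken from the actual system.

```python
from enum import Enum


class Category(Enum):
    OVERLAP = "Overlaps"
    CONTRADICTION = "Contradictions"
    TERMINOLOGY = "Terminology"


def group_by_category(findings):
    """Group (category, title, score) tuples and sort each group best first.

    Every category is always present in the result, even when empty, so the
    workspace layout stays predictable for the user.
    """
    grouped = {category: [] for category in Category}
    for category, title, score in findings:
        grouped[category].append((title, score))
    for items in grouped.values():
        items.sort(key=lambda item: item[1], reverse=True)
    return grouped


# Made-up findings, purely illustrative.
SAMPLE_FINDINGS = [
    (Category.OVERLAP, "Hypothetical standard A", 0.72),
    (Category.TERMINOLOGY, "Hypothetical term definition", 0.88),
    (Category.OVERLAP, "Hypothetical standard B", 0.93),
]

if __name__ == "__main__":
    for category, items in group_by_category(SAMPLE_FINDINGS).items():
        print(category.value, items)
```

Keeping an empty list for a category with no findings is a deliberate choice in this sketch: an empty "Contradictions" panel tells the user something, whereas a missing panel does not.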

4. Key Design Decisions

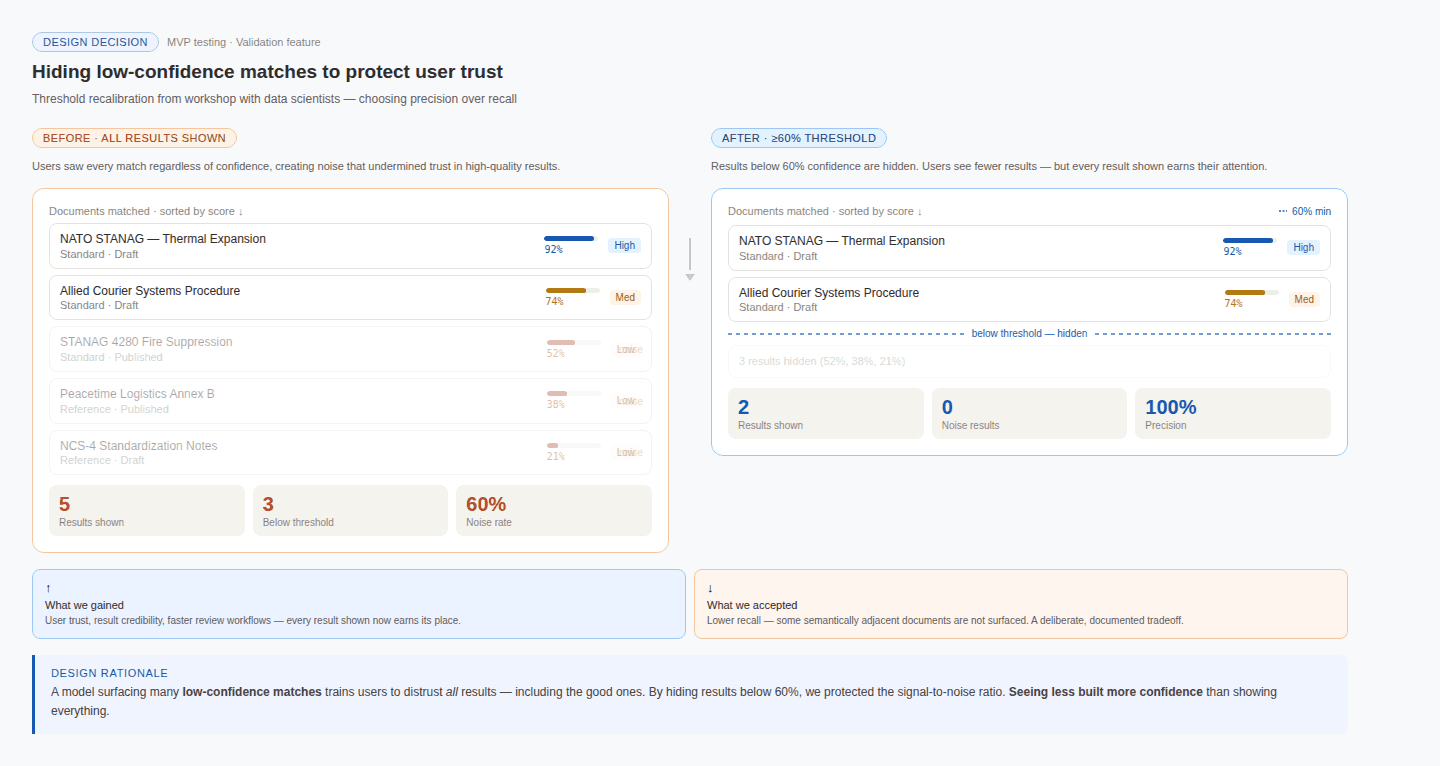

Deepening the Collaboration with Data Science

| Problem | Solution |
|---|---|
| During MVP testing, the model surfaced too many "similar" documents that lacked "semantic relevance," causing users to lose trust. | I led a workshop with Data Scientists to re-calibrate the confidence thresholds. We decided to hide results below a 60% match score to reduce noise, even though it meant "seeing less": a strategic decision to prioritize Precision over Recall to protect user trust. |
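The precision-over-recall trade-off can be sketched in a few lines. This is a minimal illustration, not the project's implementation: it assumes match scores are normalized to 0.0–1.0 (so 60% becomes `0.60`), and the `Match` fields, document IDs, and sample labels are invented for the example.

```python
from dataclasses import dataclass

# Assumption: scores are normalized to 0.0-1.0, so "60%" is 0.60.
MATCH_THRESHOLD = 0.60


@dataclass(frozen=True)
class Match:
    doc_id: str     # hypothetical identifier of the matched document
    score: float    # model's match score, 0.0-1.0
    relevant: bool  # reviewer's judgment, used only for evaluation


def visible_matches(matches, threshold=MATCH_THRESHOLD):
    """Return only the matches at or above the threshold, best first."""
    kept = [m for m in matches if m.score >= threshold]
    return sorted(kept, key=lambda m: m.score, reverse=True)


def precision_recall(matches, threshold=MATCH_THRESHOLD):
    """Precision and recall of the shown set against reviewer labels."""
    shown = visible_matches(matches, threshold)
    true_positives = sum(1 for m in shown if m.relevant)
    total_relevant = sum(1 for m in matches if m.relevant)
    precision = true_positives / len(shown) if shown else 0.0
    recall = true_positives / total_relevant if total_relevant else 0.0
    return precision, recall


# Made-up sample data, purely illustrative.
SAMPLE = [
    Match("DOC-A", 0.91, True),
    Match("DOC-B", 0.74, True),
    Match("DOC-C", 0.62, False),
    Match("DOC-D", 0.55, True),
    Match("DOC-E", 0.41, False),
    Match("DOC-F", 0.30, False),
]

if __name__ == "__main__":
    print(precision_recall(SAMPLE, 0.0))  # everything shown
    print(precision_recall(SAMPLE))       # cut-off applied
```

On this invented sample, showing everything gives precision 0.5 and recall 1.0, while the 0.60 cut-off raises precision to about 0.67 and drops recall to about 0.67. One relevant document (`DOC-D`) is hidden, which is the cost of "seeing less".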

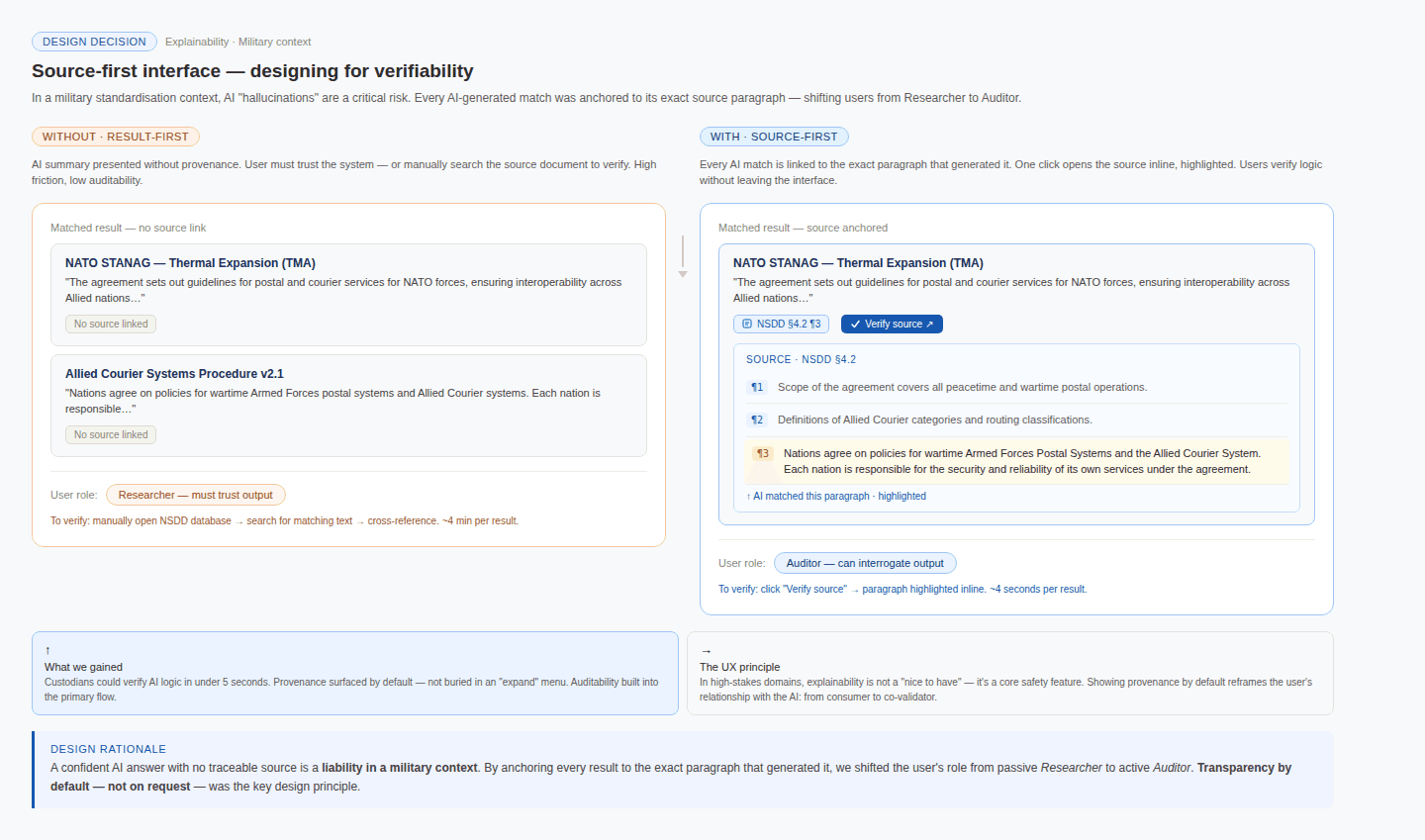

Explainability — Designing for Verifiability

| Problem | Solution |
|---|---|
| In a military standardisation context, an AI that confidently presents wrong information is more dangerous than one that presents no information at all. | I designed a "Source-First" interface where every AI-generated match was anchored to the exact paragraph in the original NSDD database that produced it. Custodians could verify the AI's reasoning in a single click, without leaving the interface. This deliberately shifted their role from passive Researcher, accepting results, to active Auditor, interrogating them. |
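One way to picture the "Source-First" idea is as a data contract: a match is only displayable if it carries an anchor that resolves to a real paragraph containing the quoted text. The sketch below is an assumption-laden illustration; `SourceAnchor`, `resolve_anchor`, the corpus layout, and the sample text are all hypothetical and do not describe the NSDD database or the real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceAnchor:
    doc_id: str           # hypothetical identifier of the source document
    paragraph_index: int  # zero-based position of the paragraph in that document
    excerpt: str          # text the match claims to be based on


def resolve_anchor(anchor, corpus):
    """Return the anchored paragraph, or None if it cannot be verified.

    `corpus` maps a document id to its list of paragraphs. The anchor only
    resolves when the excerpt really occurs in the paragraph it points to.
    """
    paragraphs = corpus.get(anchor.doc_id)
    if paragraphs is None:
        return None
    if not 0 <= anchor.paragraph_index < len(paragraphs):
        return None
    paragraph = paragraphs[anchor.paragraph_index]
    return paragraph if anchor.excerpt in paragraph else None


# Made-up corpus, purely illustrative.
SAMPLE_CORPUS = {
    "DOC-A": [
        "Scope of this example standard.",
        "Example paragraph defining the term 'interoperability'.",
    ],
}

if __name__ == "__main__":
    anchor = SourceAnchor("DOC-A", 1, "defining the term")
    print(resolve_anchor(anchor, SAMPLE_CORPUS))
```

Under this contract, a result whose anchor fails to resolve would simply not be shown, which matches the principle that no information is safer than confidently wrong information.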

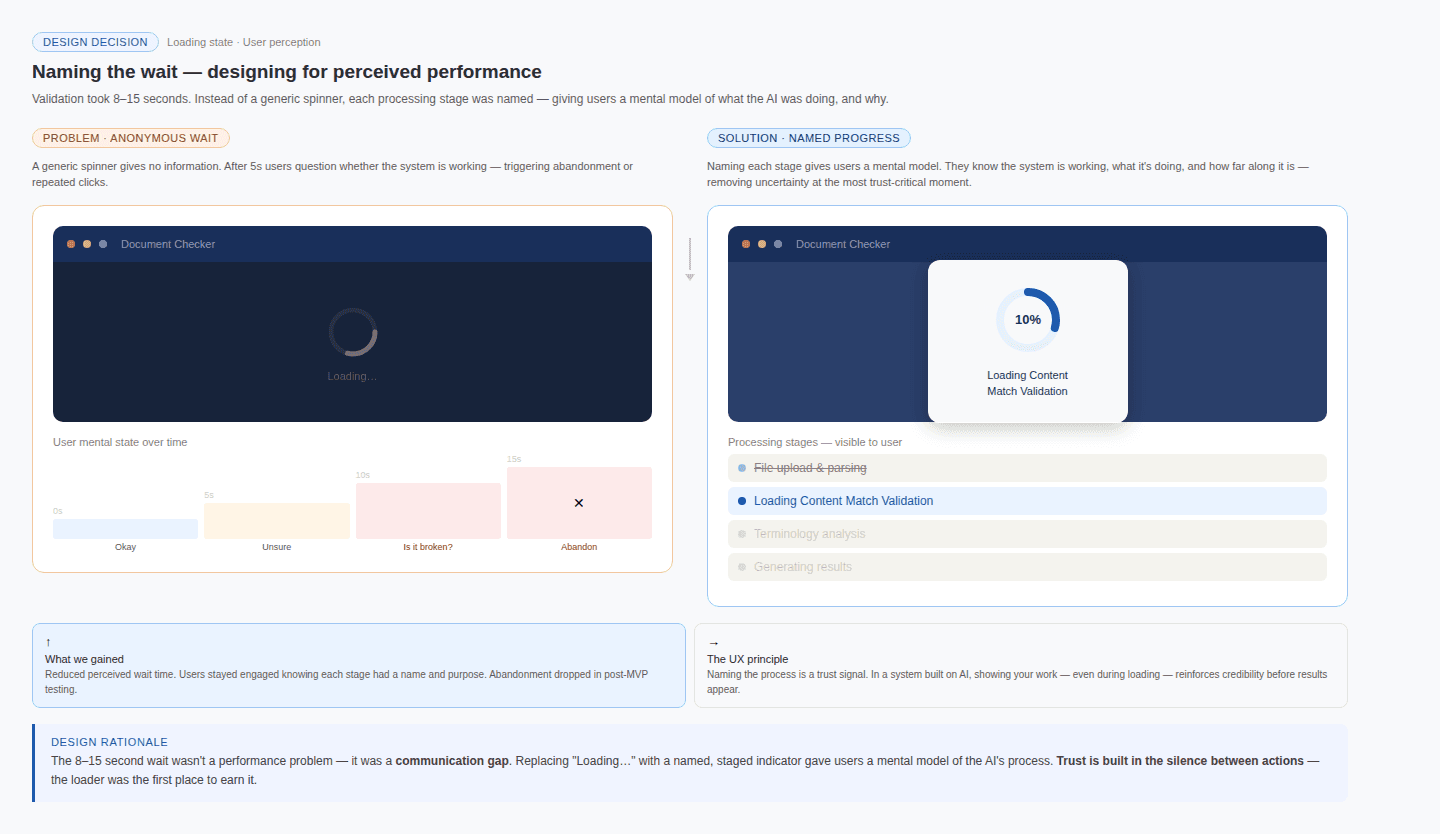

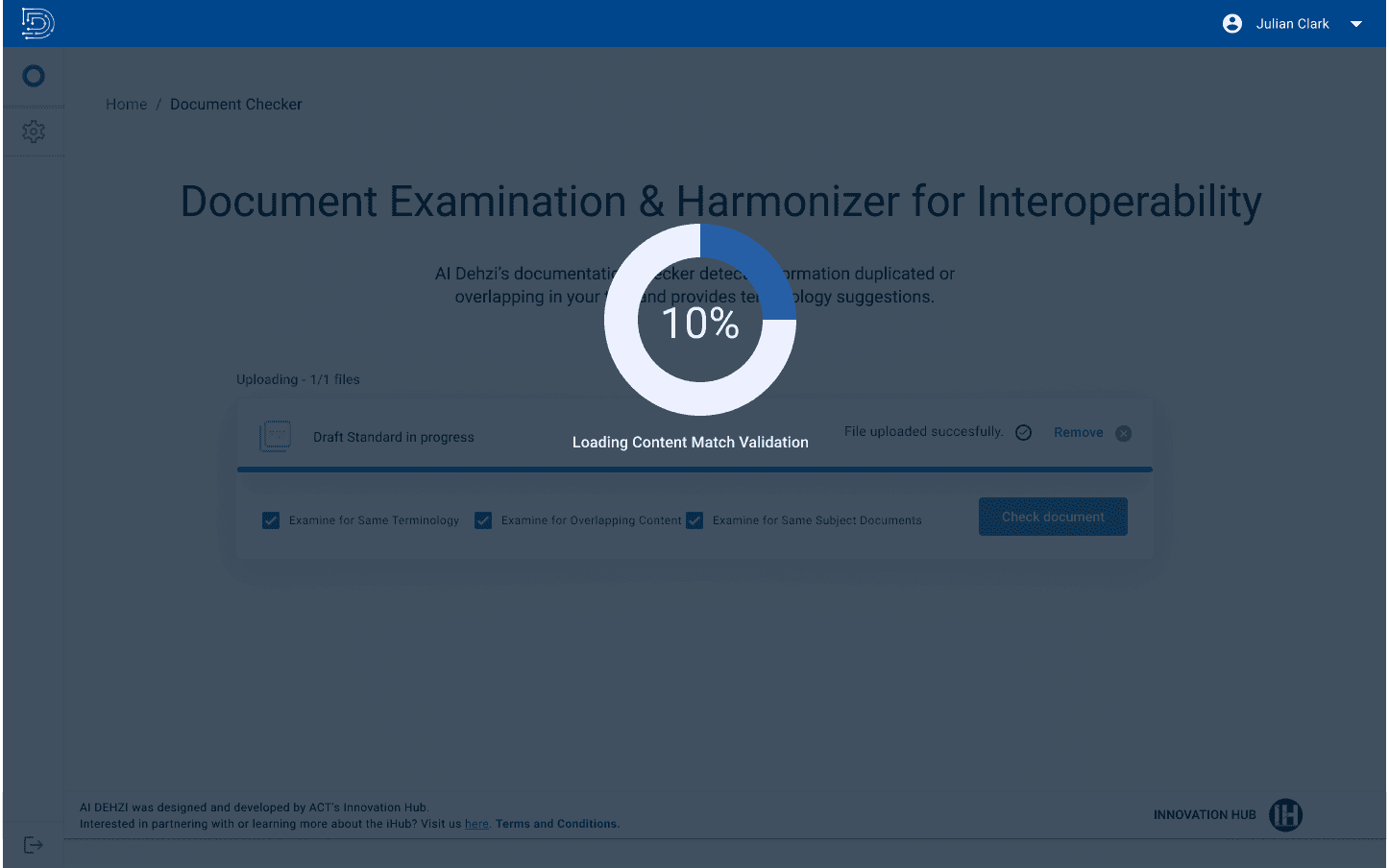

Loader — Designing for Perceived Performance

| Problem | Solution |
|---|---|
| During user testing, the validation process took 8–15 seconds to complete, long enough to cause uncertainty about whether the system was working. | Rather than display a generic spinner, I designed a labelled progress indicator that named each processing stage in plain language ("Loading Content Match Validation"). |

This gave users a mental model of what the AI was doing behind the scenes, reducing perceived wait time and preventing premature abandonment. The conscious choice to name the process — not just animate it — reinforced the system's credibility at a moment when trust was most fragile.
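A labelled progress indicator of this kind can be driven by a simple list of named stages. The following is a minimal sketch under stated assumptions: only the label "Loading Content Match Validation" comes from the case study, while the other stage names, the `run_with_labels` helper, and the placeholder work functions are hypothetical.

```python
import time


def run_with_labels(stages, report):
    """Run each (label, work) stage in order, announcing it before it starts.

    `report` is any callable that accepts a string, for example a function
    that updates the loader text in the interface.
    """
    total = len(stages)
    for position, (label, work) in enumerate(stages, start=1):
        report(f"Step {position} of {total}: {label}")
        work()
    report("Done")


def placeholder_work():
    """Stand-in for a real processing step (hypothetical)."""
    time.sleep(0.01)


# Only the second label appears in the case study; the others are invented.
STAGES = [
    ("Reading Uploaded Document", placeholder_work),
    ("Loading Content Match Validation", placeholder_work),
    ("Ranking Results", placeholder_work),
]

if __name__ == "__main__":
    run_with_labels(STAGES, print)
```

Announcing the label before the work begins, rather than after it finishes, means the text on screen always describes what is currently happening.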

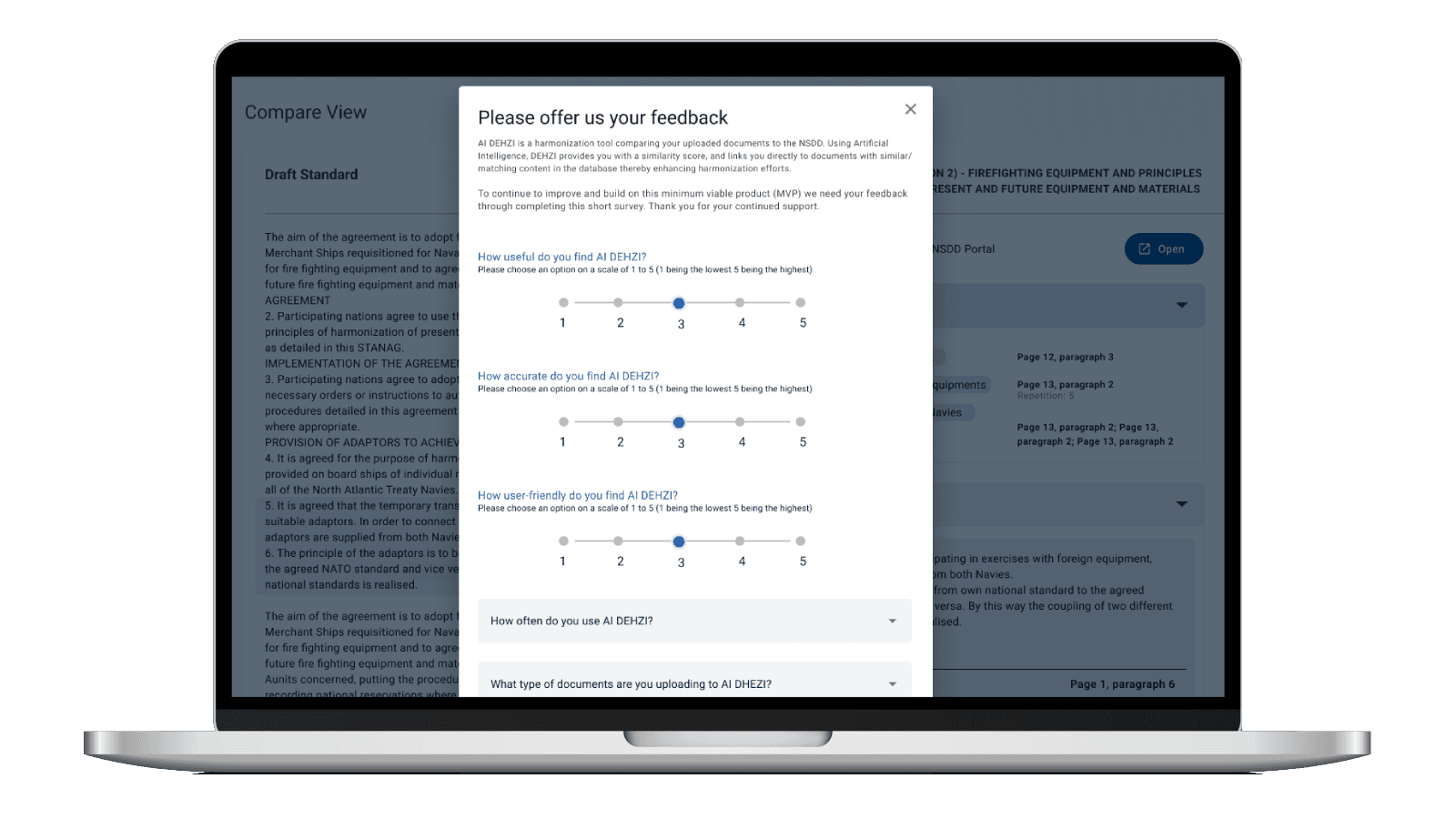

Continuous Feedback & Iteration - Embedded Feedback Loops

I designed multiple feedback points:

Inline result validation

Post-task feedback prompts

Export usage tracking

Based on real usage, we reduced noise in “similar subject” results, improved the clarity of contradiction detection, and adjusted scoring thresholds to better reflect human judgment.

This created a living system that improved through actual operational use.
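To make "adjusting scoring thresholds to better reflect human judgment" concrete, here is one possible approach, offered as an assumption rather than a description of what the team did: treat each inline validation as a (score, accepted) pair and pick the candidate threshold whose show/hide decisions agree most often with reviewers. The function names and sample feedback are invented.

```python
def agreement(feedback, threshold):
    """Share of feedback items where show/hide matches the reviewer's verdict.

    `feedback` is a list of (score, accepted) pairs gathered from inline
    result validation; a result counts as shown when score >= threshold.
    """
    if not feedback:
        raise ValueError("feedback must not be empty")
    agree = sum(1 for score, accepted in feedback if (score >= threshold) == accepted)
    return agree / len(feedback)


def best_threshold(feedback, candidates):
    """Candidate with the highest agreement; ties go to the higher threshold."""
    return max(candidates, key=lambda t: (agreement(feedback, t), t))


# Made-up feedback, purely illustrative.
SAMPLE_FEEDBACK = [
    (0.92, True),
    (0.78, True),
    (0.68, False),
    (0.64, False),
    (0.58, False),
    (0.45, False),
]

if __name__ == "__main__":
    for candidate in (0.5, 0.6, 0.7, 0.8):
        print(candidate, agreement(SAMPLE_FEEDBACK, candidate))
    print(best_threshold(SAMPLE_FEEDBACK, [0.5, 0.6, 0.7, 0.8]))
```

Breaking ties toward the higher threshold is a choice made for this sketch, in keeping with the earlier decision to favor precision over recall.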

Outcomes

User Impact: From Data Fatigue to Strategic Auditing

Reduced document research and cross-referencing time by 50% (users described it as "by half"), transforming a multi-day manual process into a focused, minutes-long verification task.

Reduced cognitive load by replacing unstructured manual queries with AI-categorized semantic matches, allowing custodians to focus on high-level decision-making.

Increased the reliability of standards harmonization by providing clear, exportable "evidence logs" that justify every change to leadership.

Organizational Impact: Setting the AI Standard for the Organization

Successfully demonstrated that AI's greatest value in defense is as a trusted decision-support tool, shifting the internal culture from skepticism of "black box" automation to adoption of "Augmented Intelligence."

Established the foundational Human-in-the-Loop framework now used by the NATO Innovation Hub to vet and design future AI-powered initiatives.

AI UX is System Design, Not Screen Design

Designing for AI requires moving beyond the interface. A Senior Designer must understand the data pipeline, the model’s confidence thresholds, and the technical constraints to design a truly cohesive experience.

Trust is Built Through Structure, Not Claims

You cannot "decorate" an AI to make it trustworthy. Trust is an architectural outcome achieved through transparency, source-verifiability, and consistent feedback mechanisms.

Designers as Model Architects

I learned that a Designer’s most valuable contribution to AI isn't the UI; it’s influencing model behavior. By defining what "relevance" looks like for a human, we directly shape the prompt engineering and re-ranking logic.

Other projects

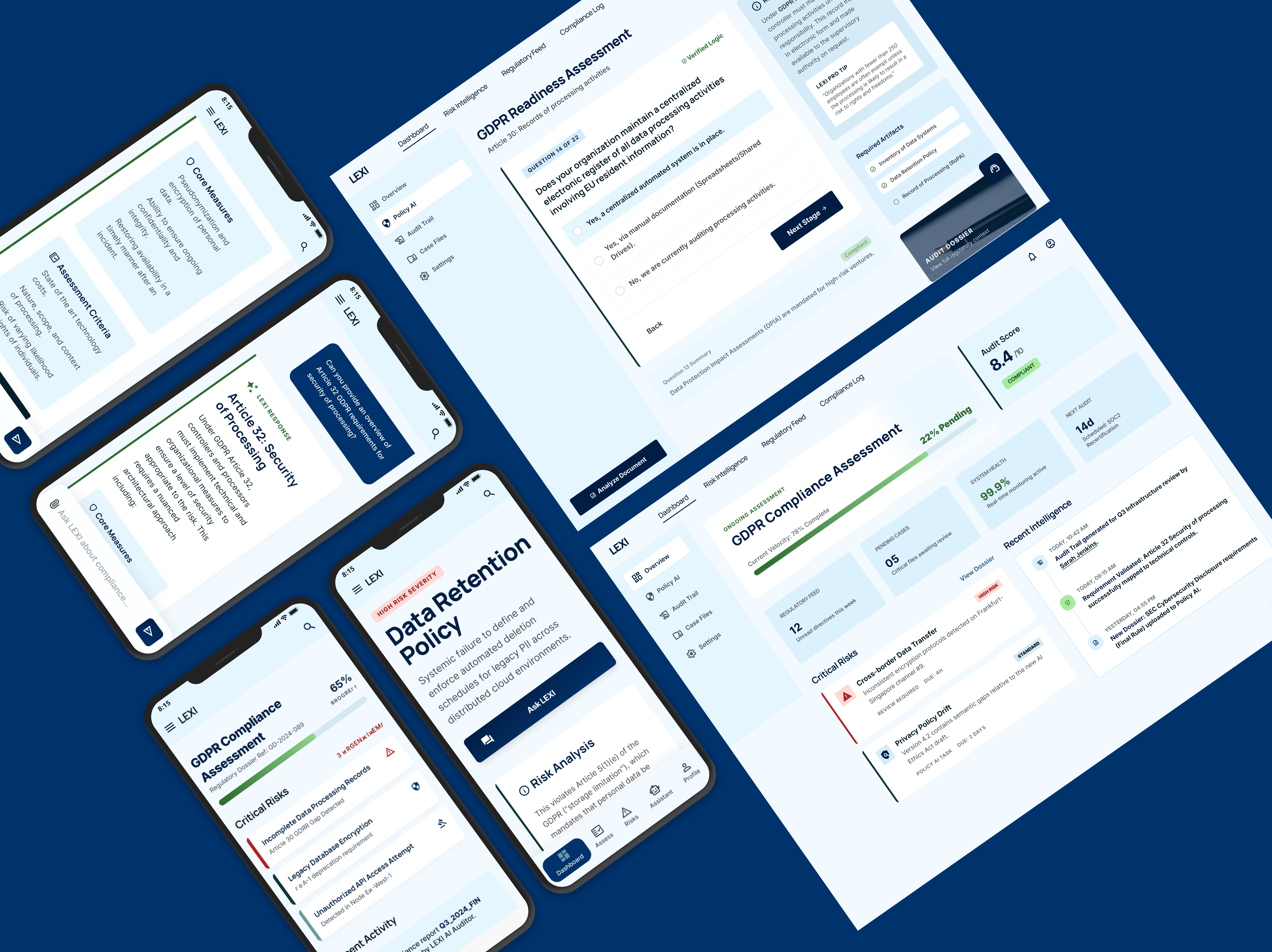

Lexi - the AI Compliance Assistant

Designing a decision support system in the form of a chat based AI application that helps non-legal teams navigate GDPR risk efficiently.

A Strategic Redesign of the MyAccount Shopper Platform

Transforming a legacy shopper portal into a streamlined self-service hub that reduces support overhead and empowers global users.